Evidence-base aging: when verified findings stop holding


The verdict was Mostly True. Then ChatGPT happened.

A claim from the Lenz corpus, verified last week:

The verdict is grounded in the strongest available evidence — MIT (2018), PubMed (2025), GWU/PLOS One (2023). The interesting thing about that evidence is that almost all of it predates the LLM era.

When a verdict's evidence base predates a structural change in the field, the verdict can hold today and still age out tomorrow. That's evidence-base aging. It's a verdict mechanic worth naming. This piece walks through one claim, two parallel cases in other domains, and what they mean for reading a verdict in 2026.

The humans-vs-bots claim — what the verification found

The verdict on this claim is Mostly True at 7/10, with 7/10 confidence. That's the answer to the claim as stated. The trace is more interesting than the label.

What the argument trace surfaced:

- MIT News (2018) — controlled tests removing bots from datasets didn't eliminate the speed advantage that false content has over true content. Human behavior — sharing, signaling, in-group dynamics — was the dominant vector even before automation became sophisticated.

- PubMed (2025) — across analyzed social-media chatter on global events, ~80% of content is human-generated; ~20% bots. Headline ratio for the verdict.

- GWU / PLOS One (2023) — in narrow political contexts, bots under 1% of the user population can generate 30%+ of the volume on a single topic. Disproportionate visibility, not majority spread.

Those three findings together explain the Mostly True verdict honestly: humans drive most spread in aggregate, but bots dominate specific narrow contexts.
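To put the GWU / PLOS One figures in per-account terms, the short calculation below compares average posting volume per bot account with average volume per human account. It takes "under 1%" and "30%+" at their boundary values (1% of users, 30% of volume), so the result is an illustrative lower bound derived from the summary above, not a number the study itself reports. The function name `per_account_amplification` is hypothetical.

```python
def per_account_amplification(bot_user_share: float, bot_volume_share: float) -> float:
    """Ratio of average volume per bot account to average volume per human account.

    Both arguments are fractions in (0, 1): the bots' share of the user
    population and the bots' share of total posting volume on the topic.
    """
    volume_per_bot = bot_volume_share / bot_user_share
    volume_per_human = (1 - bot_volume_share) / (1 - bot_user_share)
    return volume_per_bot / volume_per_human


# Boundary values from the GWU / PLOS One summary: bots at 1% of users, 30% of volume.
ratio = per_account_amplification(bot_user_share=0.01, bot_volume_share=0.30)
print(f"Each bot account posts roughly {ratio:.0f}x as much as each human account")
```

With these inputs the ratio comes out at about 42, and fewer bots or more volume would only push it higher. That is what "disproportionate visibility, not majority spread" looks like in numbers: a tiny population with outsized per-account output, while humans still produce most of the total.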

Worth distinguishing here from a separate claim that often gets conflated with this one:

"Automated bots account for more than 50% of global internet traffic." Verdict: Mostly True.

Bots are a majority of overall internet traffic now (~51% in 2024, per Imperva's 2025 Bad Bot Report). But web-traffic share and misinformation-spread share are different metrics — and the verification is careful not to conflate them.

What the trace also surfaces — and where this post turns:

- Most supportive studies predate the LLM era. MIT 2018, GWU 2023. Even the 2025 PubMed paper draws on data from 2023 and earlier.

- AI chatbots now produce false content in roughly 35% of tested responses, nearly double the rate of a year earlier.

- Crisis-context exception: during fast-moving events, roughly 47% of false content originates from bot or anonymous accounts. The human majority shrinks substantially in a crisis.


The Mostly True verdict reflects current evidence faithfully. The temporal caveat is the part the verdict label doesn't carry on its own: this verdict is about to age.

Evidence-base aging — naming the mechanic

Evidence-base aging is what happens when a True or Mostly True verdict rests on evidence that predates a structural change in the field — and the change is now actively reshaping the phenomenon the verdict describes.

It's not the verdict being wrong. The verdict was correct given the evidence available. It's the evidence base getting older than the world it describes.

Two parallel cases from the Lenz corpus, in different domains, show the same mechanic:

Case A — Renewable energy cost trajectories.

A verdict on "solar and wind power are the cheapest sources of new electricity generation in most major markets" rests on cost data that updates yearly: Lazard, BloombergNEF, IEA, EIA, and Wood Mackenzie all publish fresh LCOE comparisons annually. The 2025 verdict lands Mostly True at 8/10, on the strength of continued price declines (2–11% projected for 2025). A 2020 verdict on the same claim would have cited an older evidence vintage and supported differently scoped statements about which technologies clear the bar in which markets. Same mechanic: evidence ages, verdict refines.

Case B — Developer productivity with AI coding tools.

A verdict on "AI coding tools improve developer productivity by X%" rests on studies from a tool generation that's already obsolete. The 2023 evidence and the 2026 evidence yield meaningfully different productivity figures. Same mechanic, faster cycle: evidence ages within months, not years.

Three cases, three domains — online information dynamics, energy economics, AI labor. The mechanic isn't specific to any one of them. It's a structural property of how verification interacts with fast-moving fields.

Reading a verdict when the evidence base may be aging

For anyone citing a verified finding — students writing a thesis, journalists referencing a study, researchers building on prior work — three things to check on any verdict in a fast-moving field:

- The date of the underlying evidence, not just the date of the verification. A verdict issued in May 2026 can rest on a study published in 2018. The claim page surfaces both. The gap between them is the part to think about.

- Structural changes in the field since the evidence was gathered. The LLM-era break is the cleanest current example: pre-2022 evidence on AI-generated content needs caveats now. Similar shifts apply to pre-pandemic respiratory-disease evidence, pre-Inflation Reduction Act renewable economics, and pre-GDPR European data-privacy claims. If a structural shift has occurred between the evidence date and your citation date, read the verdict with that shift in view.

- Confidence vs. evidence-base age. A 7/10 confidence on 2018 evidence and a 7/10 confidence on 2025 evidence are not the same epistemic situation in a fast-moving field. The first is doing more work to overcome temporal distance; read it accordingly.
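The first two checks are mechanical enough to sketch as a small script. This is an illustrative sketch, not how Lenz computes anything: the `Evidence` and `StructuralBreak` types, the `aging_report` function, and the choice of 2022 as the LLM-era break year (taken from the "pre-2022 evidence" rule of thumb above) are all assumptions. Evidence years are the publication years named in the trace; the underlying data can be older.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    source: str
    year: int  # publication year; the underlying data may be older


@dataclass(frozen=True)
class StructuralBreak:
    name: str
    year: int  # first year the change is treated as reshaping the field (assumed)


def aging_report(evidence: list[Evidence], breaks: list[StructuralBreak],
                 citation_year: int) -> dict[str, dict]:
    """For each piece of evidence, return its age at citation time (check 1)
    and the structural breaks that fall after its publication year but on or
    before the citation year (check 2)."""
    report = {}
    for item in evidence:
        crossed = [b.name for b in breaks if item.year < b.year <= citation_year]
        report[item.source] = {"age_years": citation_year - item.year,
                               "breaks_crossed": crossed}
    return report


evidence = [
    Evidence("MIT News", 2018),
    Evidence("GWU / PLOS One", 2023),
    Evidence("PubMed", 2025),
]
breaks = [StructuralBreak("LLM era", 2022)]  # hypothetical break year

for source, row in aging_report(evidence, breaks, citation_year=2026).items():
    flag = ", ".join(row["breaks_crossed"]) or "none"
    print(f"{source}: {row['age_years']} years old at citation; breaks crossed: {flag}")
```

Keying on publication year is a deliberately coarse choice. The trace notes that the 2025 PubMed paper draws on data from 2023 and earlier, so recording data-collection dates instead could change which items get flagged, and where the break year sits matters just as much. Check 3, weighing a confidence score against the ages this prints, stays a judgment call.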

Citation-grade defensibility doesn't end at the verdict label. It includes knowing when the verdict is durable and when it's about to move.

Where humans and AI verdicts already disagree

The humans-vs-bots verdict is one data point. The broader question — when do verdicts and the humans reading them disagree? — has a live answer at lenz.io/pulse.

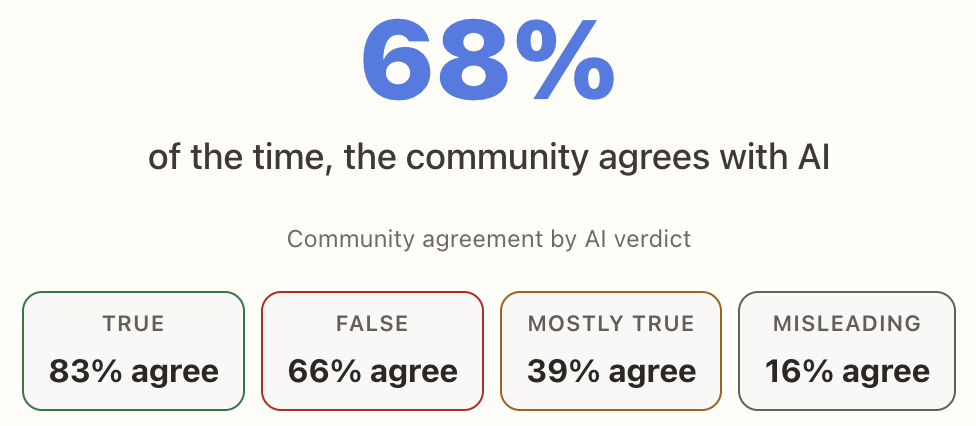

What Lenz Pulse shows at the time of writing:

A featured "Where Humans and AI Disagree" section lists the claims with the biggest divergence right now.

The Mostly True row is the interesting one. 39% agreement means the majority of community readers disagree with the verification system on Mostly True verdicts. That's not a system failure — it's an artifact of how Mostly True verdicts are structured. They carry caveats that don't always land the same way with human readers as they do with the underlying evidence trace.

Evidence-base aging is one of the things that can push a verdict's community-vs-system agreement around over time. Lenz Pulse makes that visible while it happens.

The Mostly True verdict on humans-vs-bots is correct as of today. It may not be correct as of 2027, and that won't be a failure of the verification — it'll be the system doing its job, registering that the evidence base under a long-standing finding has shifted.

Verdicts are not stable forever. The ones in fast-moving fields need to be read with a date attached. The corpus shows you when they're holding and when they're moving.

Browse what's holding: Lenz Library. Browse what's diverging: Lenz Pulse.

Verifications referenced in this post reflect Lenz's analysis at the time of publishing; verdicts can update as evidence accumulates, and the live claim pages always show the current state. The mechanic described — evidence-base aging — applies to any verdict in a fast-moving field. Tool and study citations reflect publicly available information as of May 2026.