Beyond Peer Review: How to Verify a Claim Before You Publish

Contents

- What Lenz actually does

- The 5-step workflow

- Step 1: Isolate the claim

- Step 2: Submit the claim

- Step 3: Read the Arguments as they surface — don't wait for the verdict

- Step 4: Use the Sources panel to build from evidence, not search

- Step 5: Act on the verdict

- What this looks like in practice

- Why the process matters as much as the verdict

Most researchers know how to evaluate a source.

You check the domain. You look for peer review. You scan the author's credentials, the publication date, the funding disclosures. This is good practice — and it's not enough.

Because the question "is this a credible source?" is different from the question "is this specific claim accurate?" And those two questions have very different answers, more often than most people expect.

A peer-reviewed journal can publish a finding that doesn't replicate. A well-cited textbook can carry a statistic that was quietly revised after it went to print. A respected analyst can mischaracterize a study's conclusions — not out of dishonesty, but because the gap between what a paper says and what people say it says is wider than it looks.

We built Lenz for that gap.

What Lenz actually does

Lenz is a claim verification platform. You paste a specific, declarative claim — not an article, not a topic, but a claim — and the system runs it through a structured evidence pipeline: research, debate, adjudication, verdict. Every source is cited. Every argument is visible. The reasoning is open.

The output isn't a credibility score for a source. It's a structured evidence review of the claim itself — the thing you're actually about to put your name on.

For researchers, analysts, and evidence-based writers, this is a different kind of tool.

The 5-step workflow

Step 1: Isolate the claim

Before you can verify anything, you need to identify what you're actually asserting. This sounds obvious — it isn't.

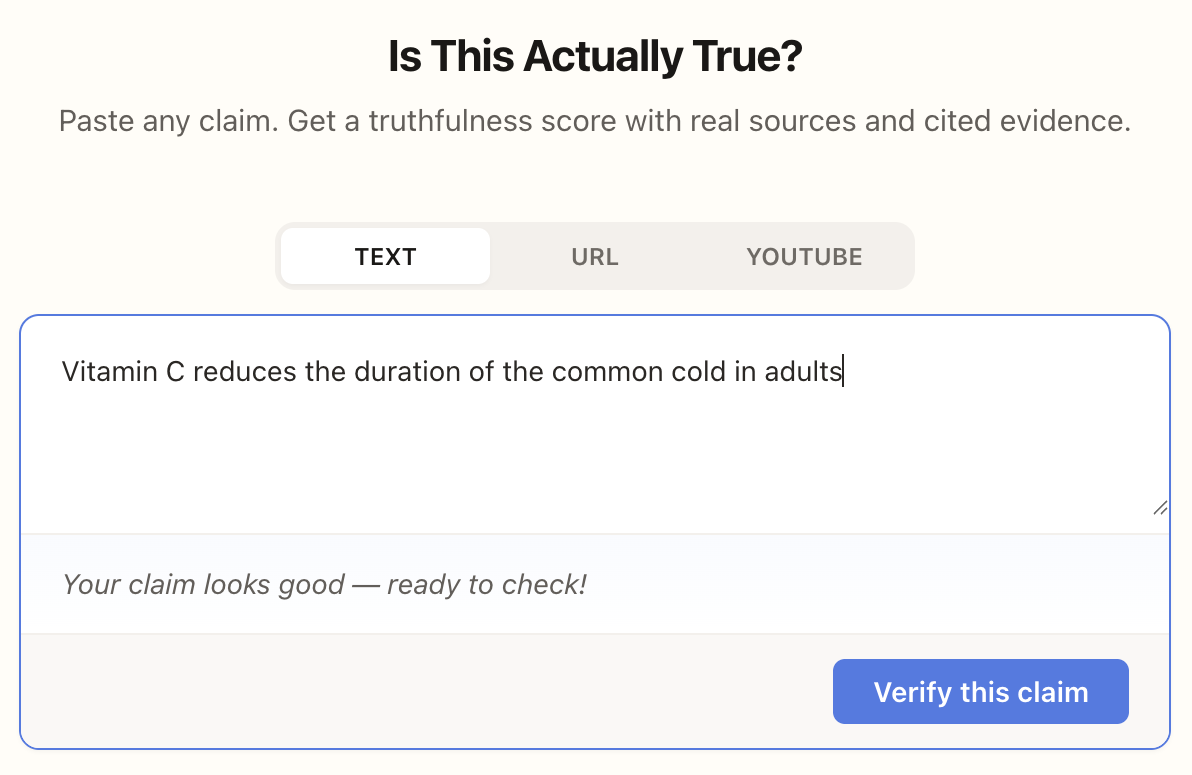

"This article discusses the relationship between vitamin C and immune function" is not a claim. "Vitamin C reduces the duration of the common cold in adults" is.

The more specific and falsifiable the claim, the more useful the verification. Vague claims produce vague verdicts. If you're citing a statistic, frame it as a specific assertion before you paste it. The precision you apply here carries through the entire process.

Step 2: Submit the claim

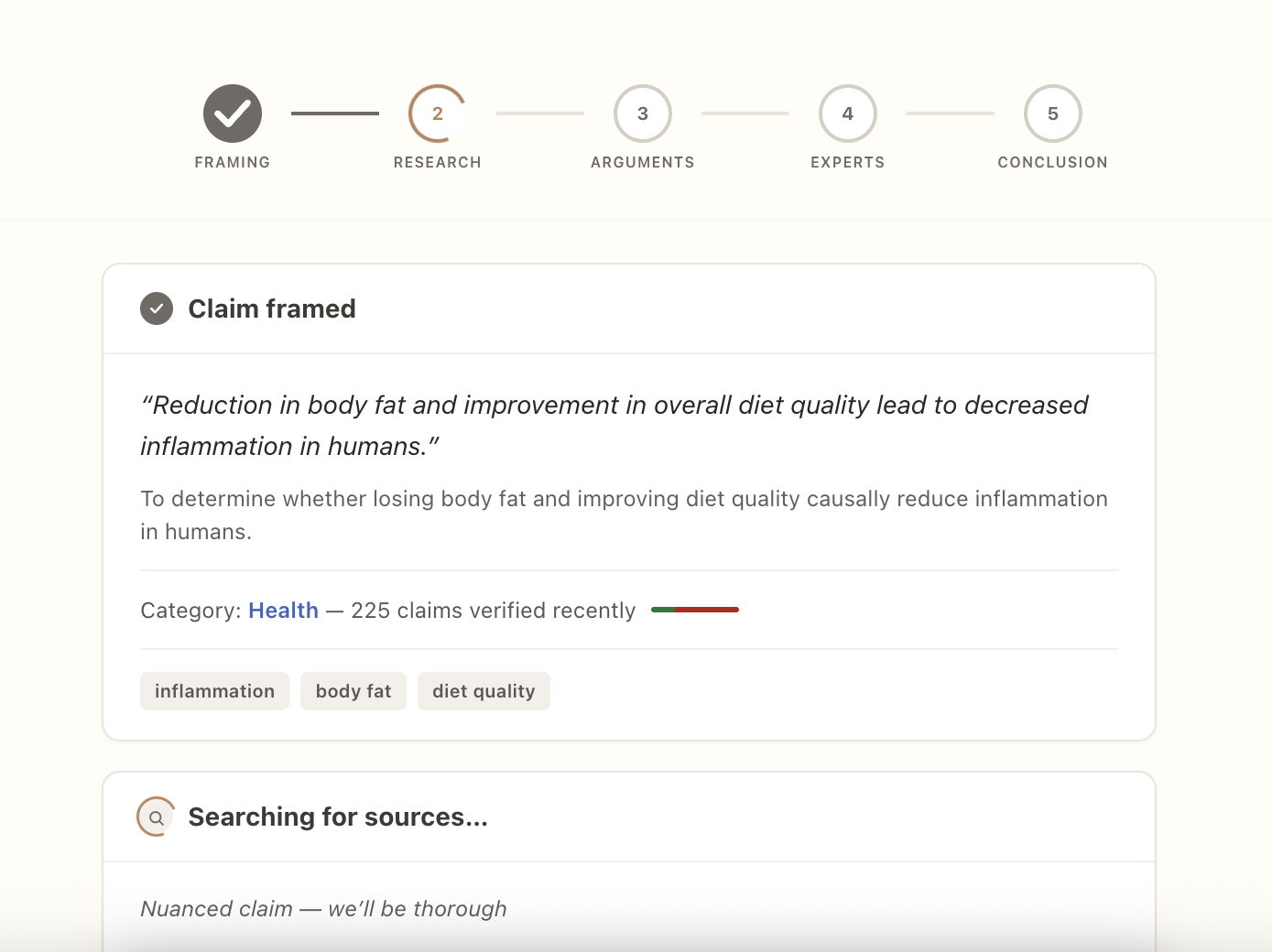

Paste the claim into Lenz. The pipeline starts immediately: the system frames the claim, pulls research from multiple independent sources, and constructs a structured debate — the strongest evidence for the claim, and the strongest evidence against it.

This takes 1 to 3 minutes. What you do with that time matters.

Step 3: Read the Arguments as they surface — don't wait for the verdict

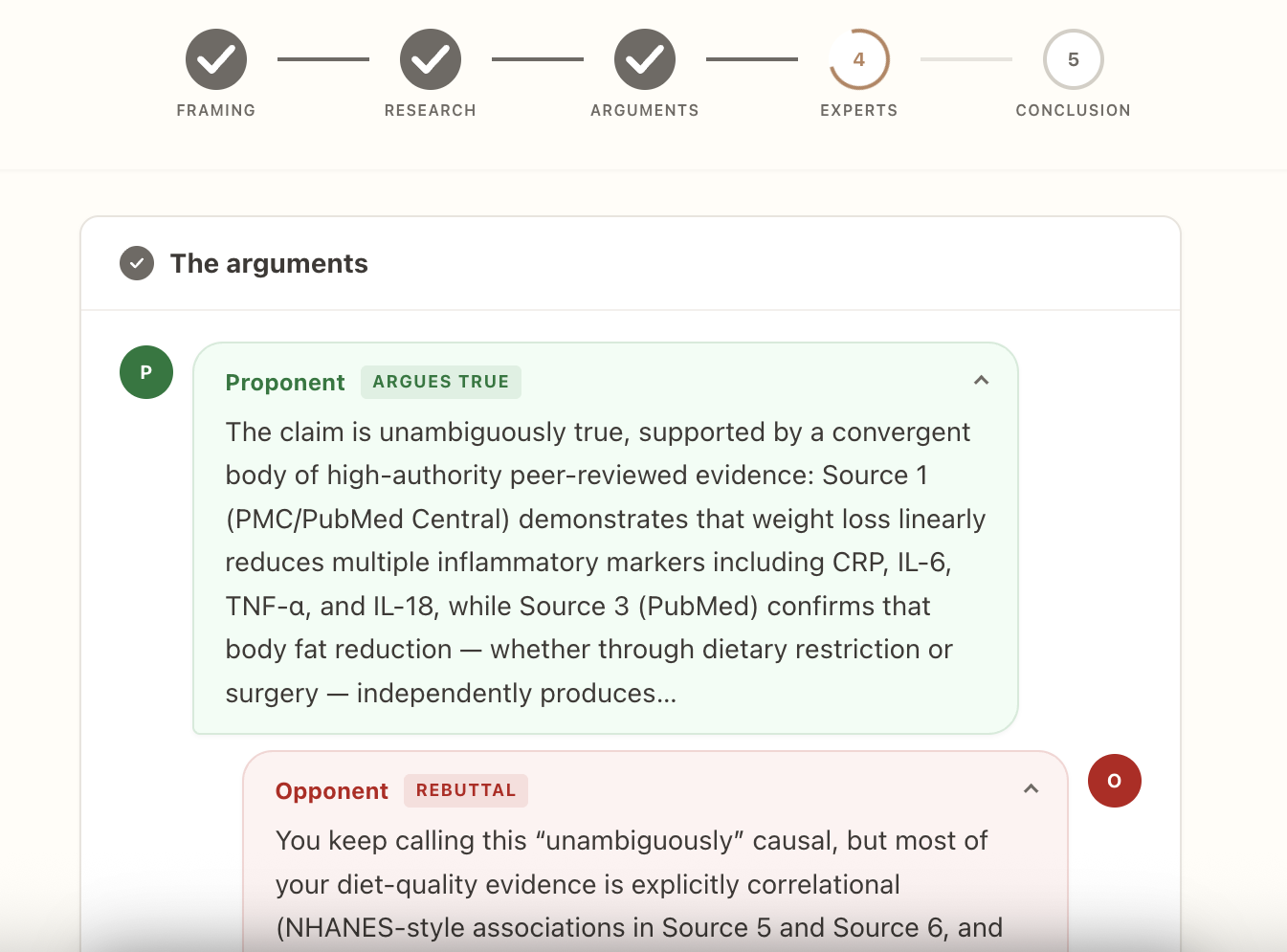

This is where most of the value lives, and where most people underuse the tool.

The Arguments panel populates in real time as the pipeline runs. Before the final verdict is delivered, you're already looking at the strongest peer-reviewed arguments on both sides of the claim — each sourced, each contextualized.

For the argument you're building:

The "for" side tells you where the claim is on solid ground. These are the sources and studies you'll want to cite directly. They've already been evaluated against your specific claim, not pulled from a general keyword search.

For the gaps you haven't addressed:

The "against" side surfaces the caveats, the contradictions, the studies that cut the other way. This is your peer reviewer, running ahead of schedule. If there's a limitation you haven't addressed, it will show up here. You can either incorporate it — add a qualifier, acknowledge the complexity — or revise the claim before you publish.

A practical example. We ran something that often circulates implicitly in research writing:

"Peer review guarantees the accuracy of a published study's findings." Verdict: False

The verdict was False. But the more useful output was the Arguments panel, which surfaced: reviewers' inability to verify raw data, documented bias and inconsistency in review outcomes, and Elsevier's own published acknowledgment that peer review is the best available method, not an error-proof one. That's not just a verdict — it's a set of sourced arguments you can use to write about why peer review matters and where it falls short, with far more precision than the original claim allowed.

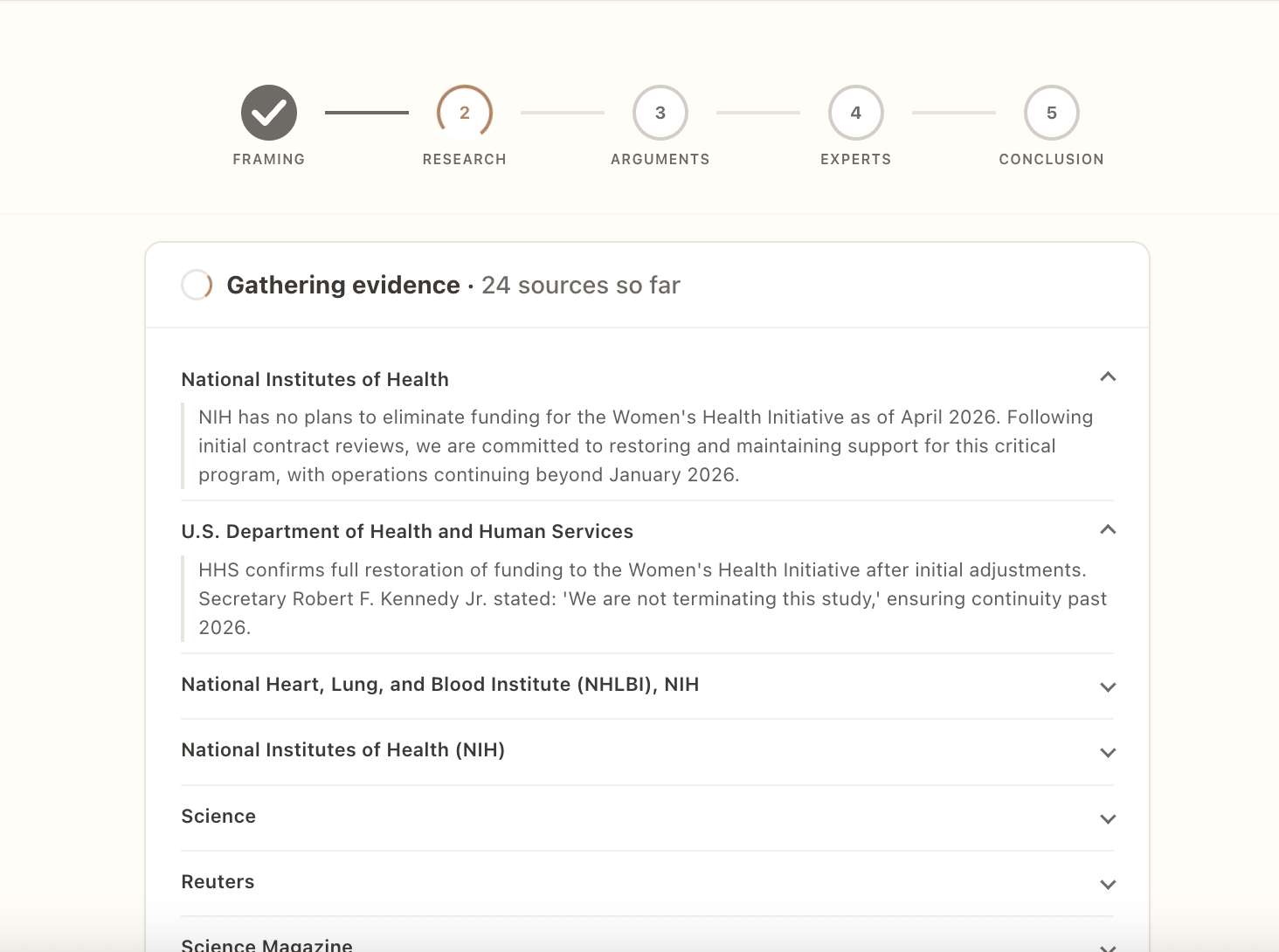

Step 4: Use the Sources panel to build from evidence, not search

Every source cited in the verification is linked — real studies, institutional reports, primary literature. These sources have already been evaluated against the specific claim you're researching.

Most research workflows start with a keyword search and filter down. Lenz inverts this: you start with a curated set of sources that have already been tested for relevance to your claim. Read them. Confirm they say what the verification says they say. Cite them directly.

This is faster than a literature search and more defensible than secondary sourcing. You're citing primary literature, not someone else's summary of it.

Step 5: Act on the verdict

The verdict gives you a clear signal for how to proceed:

- True / Mostly True: Cite with confidence. Use the Sources panel to trace each citation back to its primary source.

- Misleading: The claim is partially supported but missing critical context. The Arguments panel tells you exactly what's missing — add the qualifiers before you publish.

- False: Don't cite it. Revisit the argument you were building around it.

- Unverifiable: The evidence doesn't settle the question. That's still useful — it tells you the claim is contested, and publishing it without qualification would overstate what the evidence supports.
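
The verdict-to-action mapping above can be sketched as a small lookup table, which is handy if you track verified claims in your own notes or scripts. This is only an illustration: `Verdict`, `NEXT_STEP`, and `next_step` are hypothetical names, not part of any Lenz API, and the action text paraphrases the list above.

```python
from enum import Enum


class Verdict(Enum):
    """Verdict labels as they appear in the list above."""
    TRUE = "True"
    MOSTLY_TRUE = "Mostly True"
    MISLEADING = "Misleading"
    FALSE = "False"
    UNVERIFIABLE = "Unverifiable"


# Hypothetical lookup table: the wording paraphrases the article's guidance.
NEXT_STEP = {
    Verdict.TRUE: "Cite it; take references from the Sources panel.",
    Verdict.MOSTLY_TRUE: "Cite it; take references from the Sources panel.",
    Verdict.MISLEADING: "Add the missing context from the Arguments panel.",
    Verdict.FALSE: "Do not cite it; revisit the surrounding argument.",
    Verdict.UNVERIFIABLE: "Treat it as contested; qualify it before publishing.",
}


def next_step(label: str) -> str:
    """Return the editorial action for a verdict label, ignoring case and padding."""
    normalized = label.strip().lower()
    for verdict in Verdict:
        if verdict.value.lower() == normalized:
            return NEXT_STEP[verdict]
    raise ValueError(f"Unknown verdict label: {label!r}")


if __name__ == "__main__":
    print(next_step("Misleading"))
```

Raising on an unknown label, rather than falling back to a default, keeps a mistyped verdict from being silently treated as safe to cite.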

What this looks like in practice

Two more examples from research-adjacent claims where the verdict alone undersells what the process surfaces.

The first concerns the idea that bots, rather than people, are the main drivers of misinformation spread. This is widely assumed. The Lenz verdict is Mostly True, but for the inverted claim: human users remain the primary drivers of misinformation spread, and the research on this is more robust than most people realize. False news diffusion patterns persist even after removing bot accounts entirely. The nuance (that bots punch above their weight in specific contexts, and that AI-generated content is narrowing the gap) is the kind of thing that doesn't make headlines but belongs in any serious piece on the topic.

The second concerns cancer patients who choose alternative medicine over proven therapies, and their survival. Verdict: Mostly True. The evidence is consistent across cancer types, with hazard ratios ranging from 2.0 to 5.68 in peer-reviewed studies. The qualifier the Arguments panel surfaces: the strongest effect sizes apply specifically to curable or nonmetastatic cancers. The survival gap is driven by refusal of proven therapies, not direct harm from alternative modalities. That distinction matters if you're writing about this claim in any serious context.

Why the process matters as much as the verdict

Most fact-checking tools give you a label. Lenz gives you the evidence structure behind it — the research, the debate, the source set, the reasoning chain. For anyone who writes with precision about contested topics, this is the difference between knowing a claim is false and being able to explain exactly why, with sources.

The verdict is the output. The Arguments panel is the work.

Lenz is free to start.